Using Fitness Testing Data to Make Impact

Fitness testing is only as good as how you communicate it.

Fitness testing has become a cornerstone of physical performance work in football. Practitioners routinely collect large volumes of data across sprinting, jumping, strength, and physiological measures, often supported by increasingly sophisticated technologies and testing protocols that allow precise, repeated assessment over time.

But the reality in applied environments is that the challenge is no longer centred around data collection.

It is centred around communication.

A recent study by Asimakidis and colleagues, involving 145 elite football practitioners, explored how fitness testing data is actually used, interpreted, and preferred in real-world settings. The findings highlight a clear gap between what is typically reported and what is genuinely useful for decision-making in practice.

Better data does not automatically lead to better decisions, but better communication often does.

Tracking Change Over Time Drives Decision Making

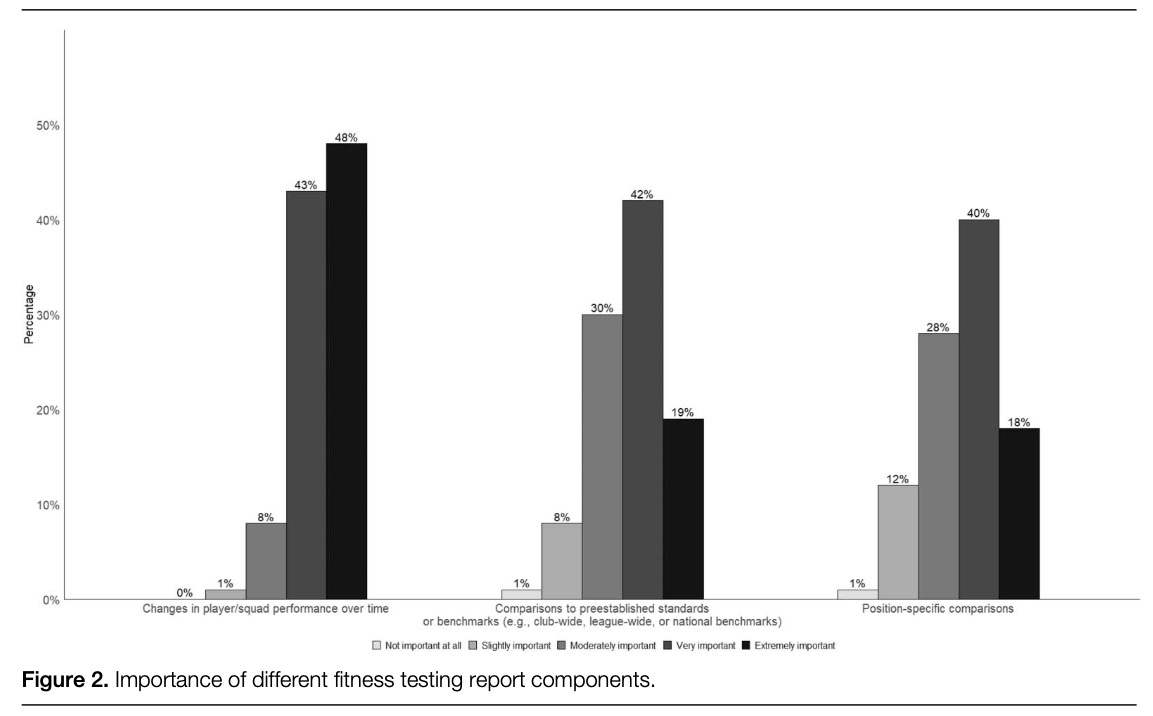

One of the most consistent findings from the study is the importance practitioners place on tracking changes in performance over time, with over 90% prioritising this approach ahead of benchmarking players against normative or positional standards.

This is particularly relevant because many testing batteries are still designed around comparisons to external references, such as percentiles or positional averages, which can provide context but do not necessarily inform day-to-day decisions.

In applied settings, practitioners are more concerned with whether a player is improving, maintaining, or declining, as this directly influences training prescription, return-to-play decisions, and long-term development strategies.

A single test result provides a snapshot, but trends provide direction.

If reporting systems fail to clearly highlight these trends, then they are unlikely to influence practice in any meaningful way.
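As a minimal sketch of how a report could surface direction rather than a single snapshot, the Python example below labels the change between two test results as improving, maintaining, or declining. The function name, the `threshold` parameter (the smallest change a practitioner chooses to treat as meaningful), and all values are illustrative assumptions, not something taken from the study.

```python
def classify_change(previous, current, threshold, lower_is_better=False):
    """Label the change between two test results.

    `threshold` is a hypothetical smallest meaningful change, in the same
    units as the test. It is an assumption set by the practitioner.
    """
    change = current - previous
    if lower_is_better:
        change = -change  # e.g. a faster sprint time counts as improvement
    if change > threshold:
        return "improving"
    if change < -threshold:
        return "declining"
    return "maintaining"


# Illustrative values only: three testing sessions for one player.
cmj_heights_cm = [38.0, 38.4, 40.1]
sprint_times_s = [4.20, 4.21, 4.32]

cmj_status = classify_change(cmj_heights_cm[-2], cmj_heights_cm[-1], threshold=1.0)
sprint_status = classify_change(
    sprint_times_s[-2], sprint_times_s[-1], threshold=0.05, lower_is_better=True
)

print("CMJ:", cmj_status)
print("Sprint:", sprint_status)
```

The threshold matters more than the code: in practice it would need to reflect the measurement error of the specific test and protocol being used.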

Individual and Team Perspectives Must Be Integrated

The study also highlights how practitioners view the balance between individual and team-level data, with many valuing a combination of both rather than prioritising one exclusively.

This reflects the complexity of football environments, where decisions are rarely made in isolation.

At the individual level, data is essential for tailoring training interventions, identifying strengths and weaknesses, and managing rehabilitation processes.

At the team level, data provides context around overall squad trends, physical status, and potential areas of concern that may not be visible when looking at individuals alone.

The key point is that effective reporting systems should not force a choice between individual and team perspectives, but instead allow practitioners to move seamlessly between the two, ensuring that decisions are both specific and contextualised.

Raw Data Remains Central to Practice

Despite advances in analytics and the increasing availability of complex metrics, raw data remains the most trusted and widely used format among practitioners, with measures such as sprint times, jump heights, and strength outputs forming the foundation of most performance decisions.

This preference is largely driven by simplicity and transparency, as raw data is easy to interpret and requires minimal explanation, which is critical in fast-paced environments where decisions often need to be made quickly.

However, this does not mean that more advanced metrics lack value.

Standardised scores can be useful for comparisons across players or time points, while composite scores can help summarise multiple variables into a single, more digestible metric for communication with coaches.

The key is not to replace raw data, but to complement it, ensuring that the level of complexity matches the needs of the end user.
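To make the idea of standardised and composite scores concrete, here is a small Python sketch that converts raw results to z-scores within a squad and averages them into one composite per player. The player names, test names, values, and the equal weighting of tests are all assumptions for illustration; the study does not prescribe a particular method.

```python
from statistics import mean, stdev


def z_scores(values, lower_is_better=False):
    """Standardise values against the group mean and sample SD.

    The sign is flipped for tests where a lower raw value is better,
    so a positive z-score always means better than the squad average.
    """
    m = mean(values)
    sd = stdev(values)
    sign = -1.0 if lower_is_better else 1.0
    return [sign * (v - m) / sd for v in values]


# Illustrative raw data only.
players = ["Player A", "Player B", "Player C"]
sprint_30m_s = [4.10, 4.20, 4.30]  # lower is better
cmj_cm = [42.0, 38.0, 40.0]        # higher is better

sprint_z = z_scores(sprint_30m_s, lower_is_better=True)
cmj_z = z_scores(cmj_cm)

# Equal-weighted composite: the mean of each player's z-scores.
composite = {
    name: mean([s, c]) for name, s, c in zip(players, sprint_z, cmj_z)
}

for name in players:
    print(f"{name}: composite z = {composite[name]:+.2f}")
```

Reporting the raw sprint time and jump height alongside the composite keeps the summary transparent, which is consistent with raw data remaining the primary format.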

Simplicity in Visualisation Enhances Decision Making

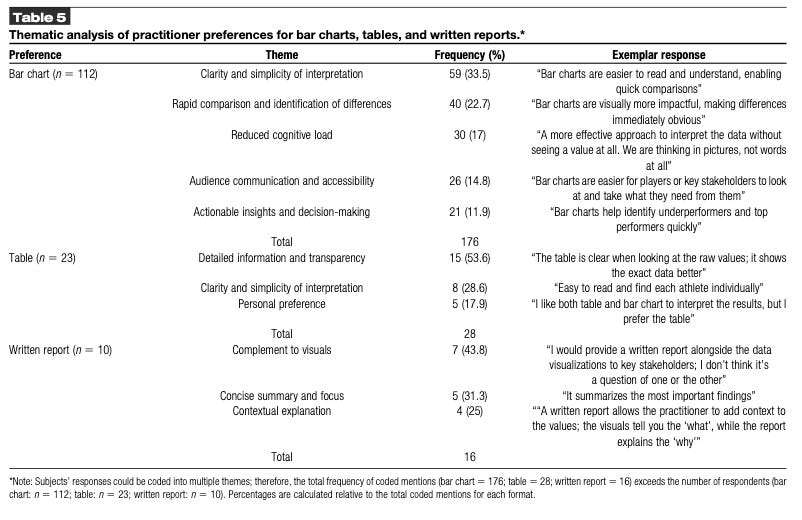

Another important takeaway from the study is how practitioners prefer data to be visualised, with a clear preference for simple and familiar formats that reduce cognitive load and allow for rapid interpretation.

Bar charts were commonly preferred for comparing players, as they provide a clear and intuitive representation of differences.

Line charts were favoured for tracking changes over time, allowing practitioners to quickly identify trends and patterns.

More complex visualisations, while potentially informative, were less consistently preferred, particularly when they required additional explanation.

In high-performance environments, speed of understanding is critical.

If a visualisation requires significant interpretation, it is less likely to be used effectively, regardless of how technically accurate it may be.

The Role of Uncertainty and the Risk of Overcomplication

The study also explored attitudes towards the inclusion of uncertainty measures, such as confidence intervals or error bars, which are often promoted as best practice within research settings.

However, only around half of practitioners viewed these positively, with many highlighting concerns around complexity and potential confusion, particularly for coaches or players who may not have a statistical background.

This highlights an important tension within applied sport science.

While there is value in acknowledging uncertainty, this must be balanced against the need for clarity and usability.

Information that is technically correct but poorly understood has limited practical value.

As a result, practitioners need to consider how and when to include such information, potentially reserving more detailed statistical insights for internal discussions, while simplifying outputs for broader communication.
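One way to picture this split is a single result formatted differently by audience. The Python sketch below is a hypothetical helper, not a method from the study: the `margin` value stands in for whatever uncertainty estimate the practitioner already has, and the numbers are invented.

```python
def report_line(label, value, unit, margin, audience="coach"):
    """Format one test result for a given audience.

    `margin` is a hypothetical uncertainty margin supplied by the
    practitioner. It is shown only in the internal version.
    """
    if audience == "internal":
        low, high = value - margin, value + margin
        return f"{label}: {value:.1f} {unit} (range {low:.1f} to {high:.1f} {unit})"
    return f"{label}: {value:.1f} {unit}"


# Illustrative values only.
internal_line = report_line("CMJ height", 40.1, "cm", 1.2, audience="internal")
coach_line = report_line("CMJ height", 40.1, "cm", 1.2)

print(internal_line)
print(coach_line)
```

The same underlying number feeds both outputs, so the simplified version loses detail but not consistency with what staff discuss internally.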

From Data Collection to Decision Influence

Ultimately, the most important message from this research is not about specific metrics or visualisation techniques, but about the purpose of fitness testing itself.

Testing should not be viewed as an isolated process of measurement, but as part of a broader system designed to inform and influence decisions.

This requires a shift in mindset, from focusing on what data can be collected, to focusing on how that data can be used.

It involves asking more targeted questions. What does this data actually tell us? How does it inform our next action? How can it be communicated in a way that is immediately understood by others?

Because in practice, data only has value when it leads to action.

If it does not influence training design, player management, or decision-making processes, then its impact is limited, regardless of its accuracy or sophistication.

Key Takeaways

The findings from this study provide clear guidance for practitioners working in football environments.

There is a need to prioritise tracking changes in performance over time, rather than relying solely on benchmarking against external standards.

There is value in integrating both individual and team-level data to ensure decisions are both specific and contextualised.

Raw data should remain central to reporting, supported by more advanced metrics where appropriate.

Visualisations should be simple, clear, and designed to reduce cognitive load.

And most importantly, all reporting should be built with the end user in mind, ensuring that data is not only collected, but effectively communicated and applied.

Because in high-performance environments, the value of data is not determined by how much is collected, but by how well it is understood and used.

Football Performance Network

If you want to make better decisions around fitness testing, data interpretation, and how testing actually informs your training process, this is exactly what we work on inside the Football Performance Network.

You will be learning alongside 70+ physical performance coaches and sport scientists working in professional football, sharing ideas, solving problems, and improving your day-to-day practice.

The next intake opens in July 2026.